Shutong Wu

PhD Student in Computer Science

I'm a PhD candidate at the University of Rochester, advised by Prof. Zhen Bai in the Interplay Lab. I build systems where AI agents autonomously create and verify 3D content inside game engines — from the protocol layer between LLMs and Unity (MCP-Unity, SIGGRAPH Asia 2025), to multi-agent pipelines for scene generation, to trace-driven optimization that makes these systems reliable.

Recent Updates

- Apr 2026: Advanced from PhD student to PhD candidate.

- Dec 2025: Volunteered at SIGGRAPH and SIGGRAPH Asia 2025!

- Oct 2025: Unity-MCP was accepted to the SIGGRAPH Asia 2025 Technical Communications track.

- Jul 2025: Maintainer and core contributor of the open-source Unity-MCP repository. GitHub

- May 2025: AGen received a Best LBW Paper nomination at AIED 2025. Paper

- Sep 2024: Started my PhD at the University of Rochester, advised by Prof. Zhen Bai in the Interplay Lab.

Research Interests

AI-Driven 3D Authoring

Building multi-agent systems that autonomously author 3D scenes in game engines, from concept generation and asset creation to spatial composition and programmatic verification.

Engine & Runtime Systems

Designing protocol bridges between LLMs and game engines, with focus on state management, reliability infrastructure, and trace-driven optimization for agent-engine interaction.

Mixed Reality

Exploring immersive AR/VR experiences and interaction techniques, including diminished reality for focus enhancement and in-situ 3D authoring in virtual environments.

Analogy & Analogical Learning

Investigating how people generate and understand analogies, leveraging analogical reasoning to develop innovative educational tools and exploring novel computational approaches to analogical thinking.

Publications

MCP-Unity: Protocol-Driven Framework for Interactive 3D Authoring

The technical report for the Unity-MCP project, covering how we designed, implemented, and evaluated the framework for 3D authoring within the Unity Editor.

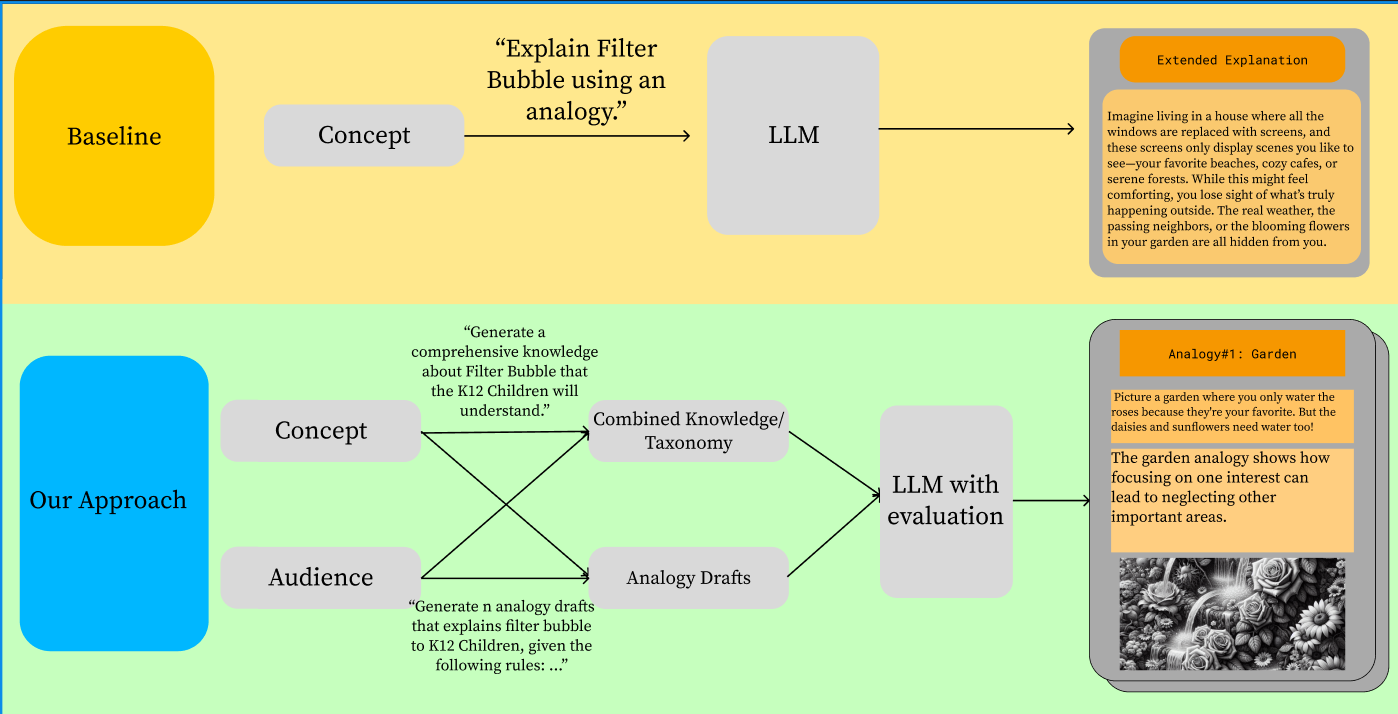

AGen: Personalized Analogy Generation through LLM

An analogy generation platform that creates tailored analogies based on user profiles and education levels, enhancing personalized learning experiences.

Current Projects

Unity-MCP

Core maintainer of Unity-MCP, a protocol bridge that enables AI agents to programmatically control the Unity Editor. Designed and implemented shader, material, and VFX tooling, the CLI, and runtime compilation. Published at SIGGRAPH Asia 2025.

3DGenAgents

A multi-agent runtime for autonomous 3D scene generation in Unity. Orchestrates specialized AI agents (concept art, scene composition, UI, verification) through MCP.

VR-MCP (In Development)

Extending MCP capabilities to VR environments so that AI language models can integrate with virtual reality development workflows and interaction paradigms.

Diminished Reality for Enhanced Focus

An adaptive mixed reality technique that uses semantic understanding to remove everyday objects from the user's view, improving focus and productivity in AR environments.

Past Projects

VR Vision Testing (Penn Medicine)

Designed and implemented VR vision tests on the Quest 2 platform, providing accessible virtual alternatives to physical vision tests for low-vision patients. Secured two patents for novel VR-based vision testing methodologies.

Side Projects

Learner-web

An interactive web learning experience that documents my self-study of the math behind computer graphics.

ShaderWasp

A promptable GLSL/HLSL/Slang shader playground that generates real-time visuals in the browser, inspired by shader lab workflows.

PointWisp (3DGS / PLY Viewer)

A lightweight, static-web PLY and 3DGS viewer/editor/exporter.

Literature Seekers

My personal tool for retrieving papers quickly and finding relevant literature for new research ideas. Currently supports keyword-based and semantic search across Semantic Scholar, arXiv, and GitHub.

Experience

University of Rochester

- Building AI agent infrastructure for autonomous 3D content creation in game engines

- Core maintainer of Unity-MCP (SIGGRAPH Asia 2025) and 3DGenAgents multi-agent runtime

- Advised by Prof. Zhen Bai in the Interplay Lab

- GPA: 4.0/4.0

- Teaching Assistant for AR/VR Interaction, Intro to AI, and Computer Algorithms

Penn Medicine Ophthalmology

- Designed and implemented VR vision tests on the Quest 2 platform for low-vision patients

- Secured two patents for novel VR-based vision testing methodologies

- Developed end-to-end VR software solution within 9 months

- Utilized Unity XR, custom shaders, and post-processing techniques

Penn CG Lab

- Collaborated with Prof. Lingjie Liu on a NeRF-based research project

- Created Unity animation infrastructure in C# for volumetric scene generation

- Developed C++ plugins to convert SMPL files to FBX animations

ByteDance

- Developed efficiency tools including Overdraw and Mipmap Collector

- Reduced average frame time by 10ms through graphics optimization

- Collaborated with game studios on performance analysis and optimization

NetEase Games

- Developed mobile game features and mechanics

- Optimized game performance and user experience

- Collaborated with cross-functional teams on game development projects

Technical Skills

Programming Languages

Graphics & VR

AI & Agent Systems

Tools & Frameworks

Contact

I'm always interested in discussing research collaborations, new opportunities, or just connecting with fellow researchers in AR/VR and 3D generation.

Office: Wegmans Hall, University of Rochester, Rochester, NY

Lab: Interplay Lab